Projects

Advancing LLM interpretability and AI ethics.

Current Projects

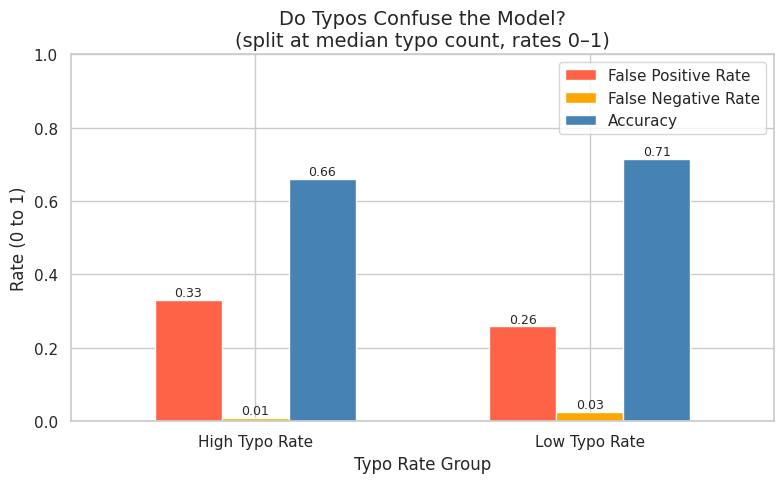

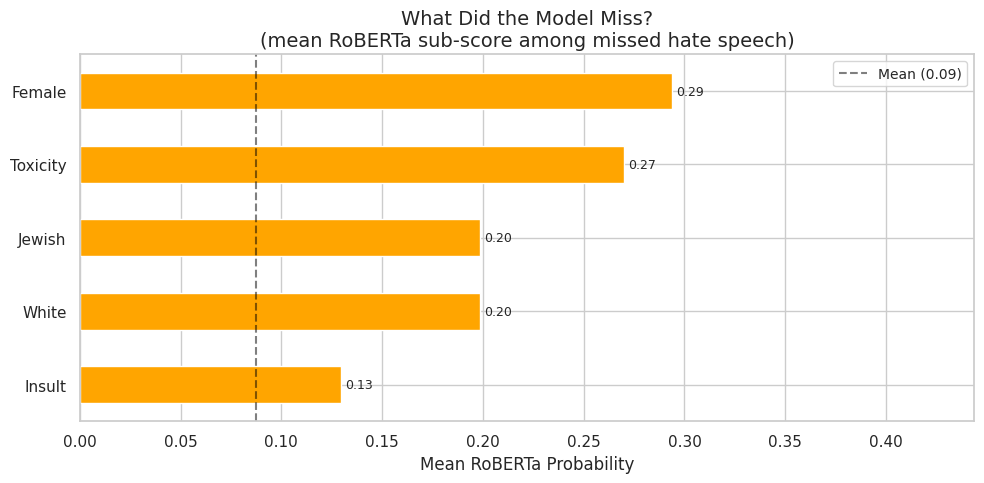

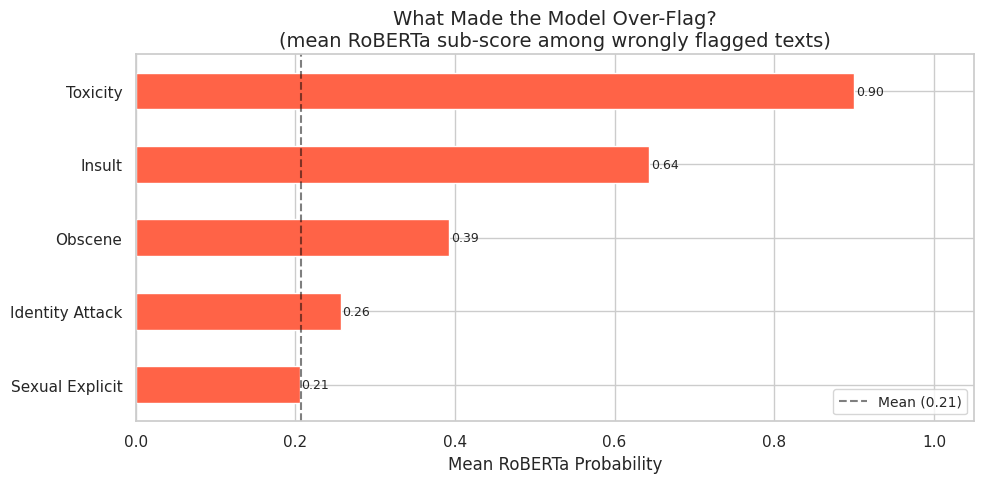

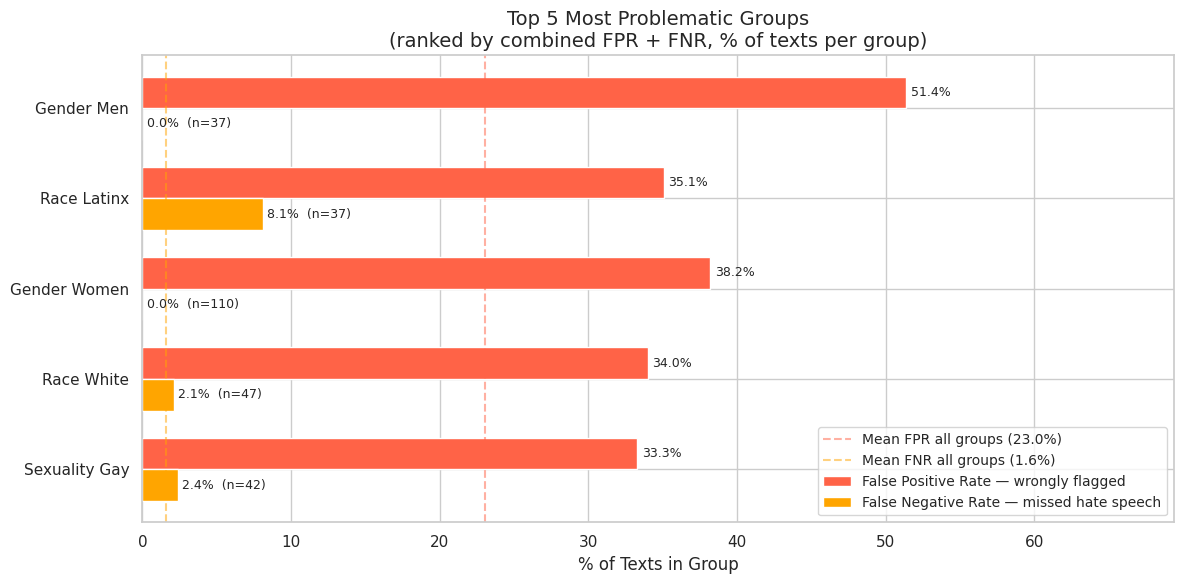

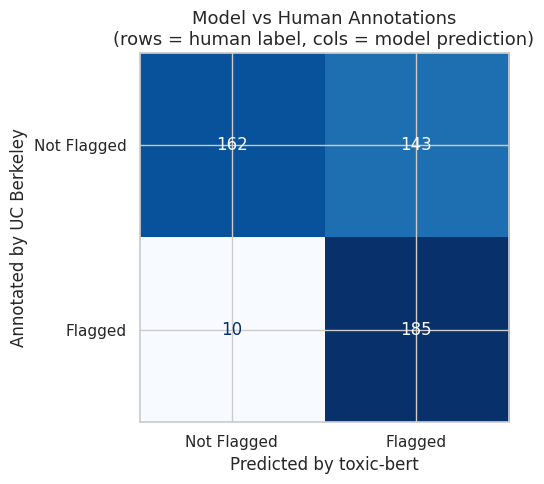

Explainable Censorship

Analyzing open-source moderation models to examine how they behave in ambiguous cases. Using the UC Berkeley Measuring Hate Speech dataset, the project explores interpretability, calibration, and explanation techniques to improve transparency in automated content moderation.

Research Leads: Yiping Chen
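
As a rough illustration of what "calibration" means for a moderation classifier, the sketch below computes expected calibration error (ECE), one common calibration metric. The project description does not say which metric is used, so treat this as an assumption; the function name and the toy inputs are hypothetical.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Bin-size-weighted gap between mean confidence and accuracy.

    confidences: predicted probability of the chosen label, each in [0, 1]
    correct:     booleans, True where the prediction matched the label
    """
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    total = len(confidences)
    if total == 0:
        return 0.0

    # Assign each prediction to an equal-width confidence bin.
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the last bin
        bins[idx].append((conf, ok))

    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / total) * abs(avg_conf - accuracy)
    return ece


# Hypothetical example: a model that is 90% confident but right 3 times out of 4.
overconfident = expected_calibration_error([0.9, 0.9, 0.9, 0.9],
                                           [True, True, True, False])
```

A well-calibrated model has an ECE near zero. On ambiguous posts, a large gap suggests the model's stated confidence should not be taken at face value.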

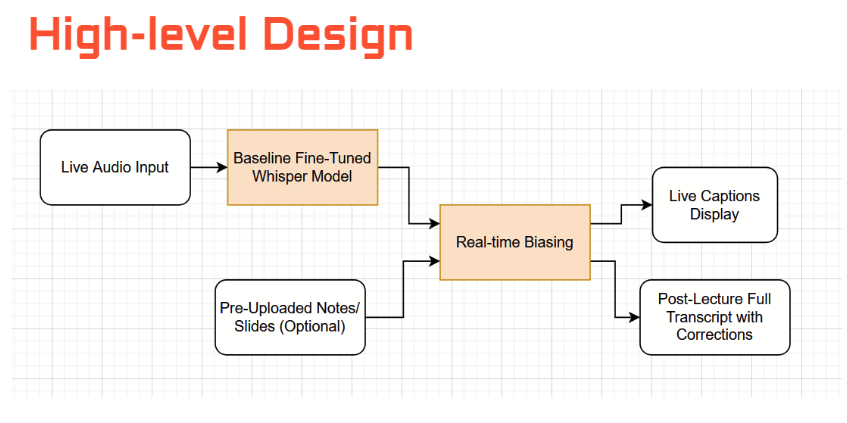

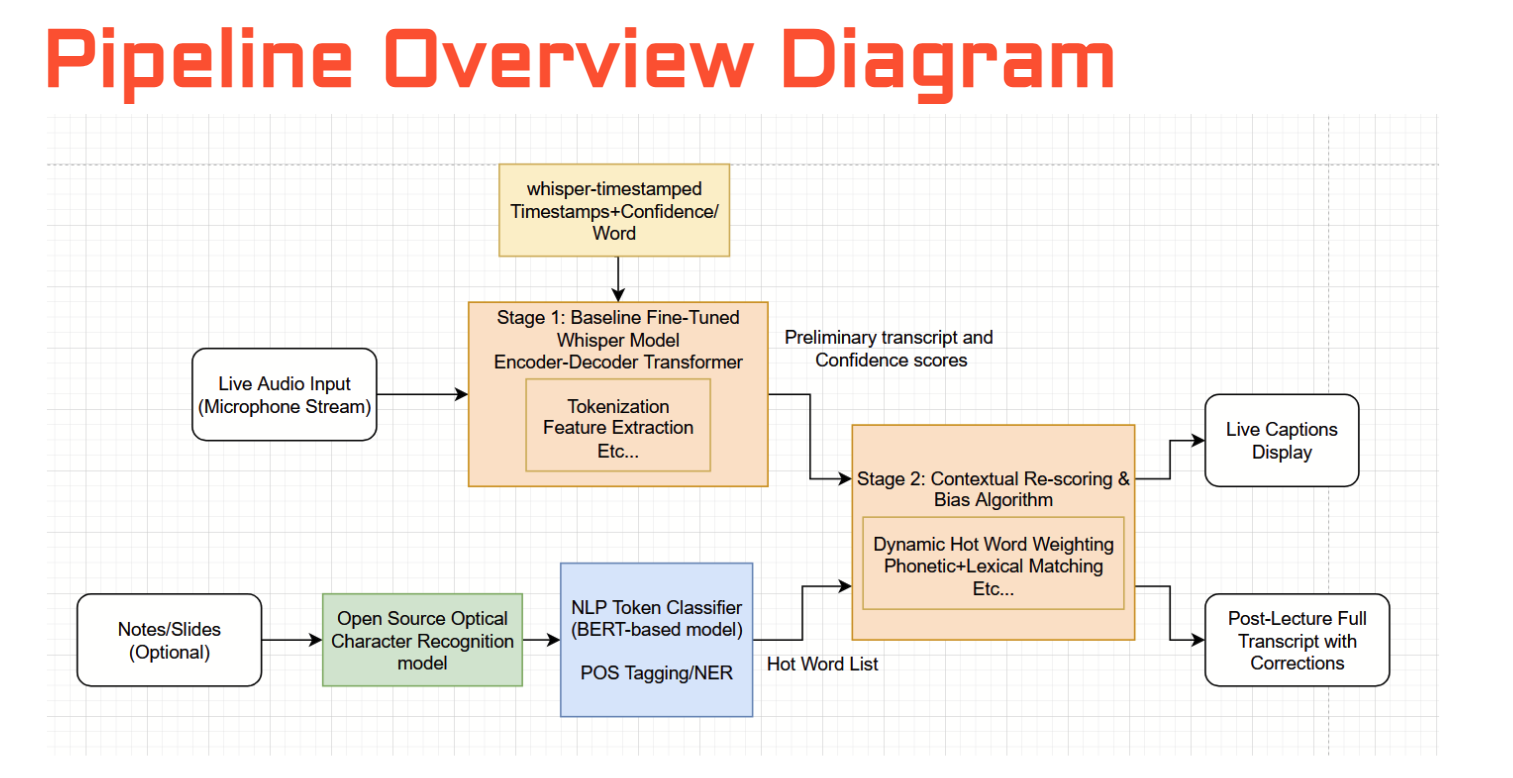

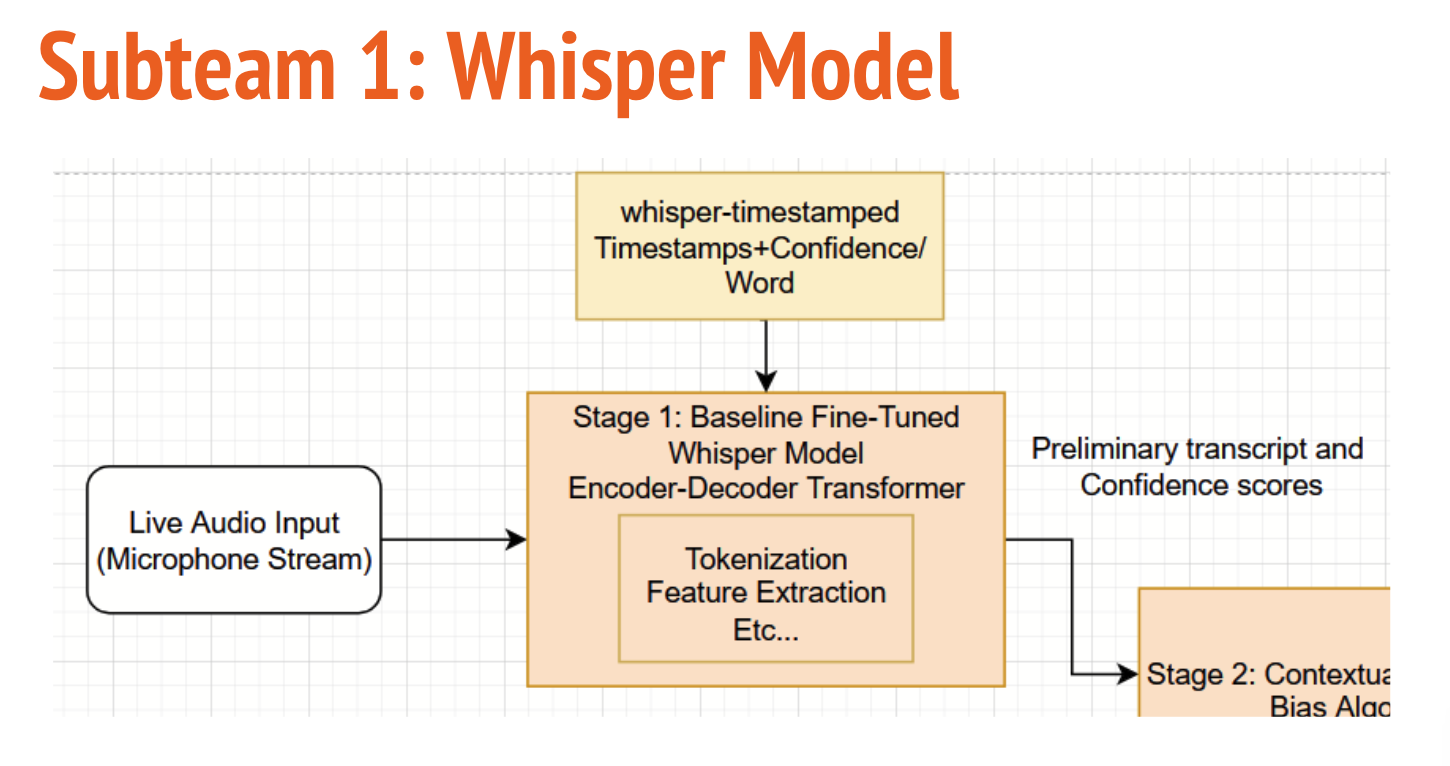

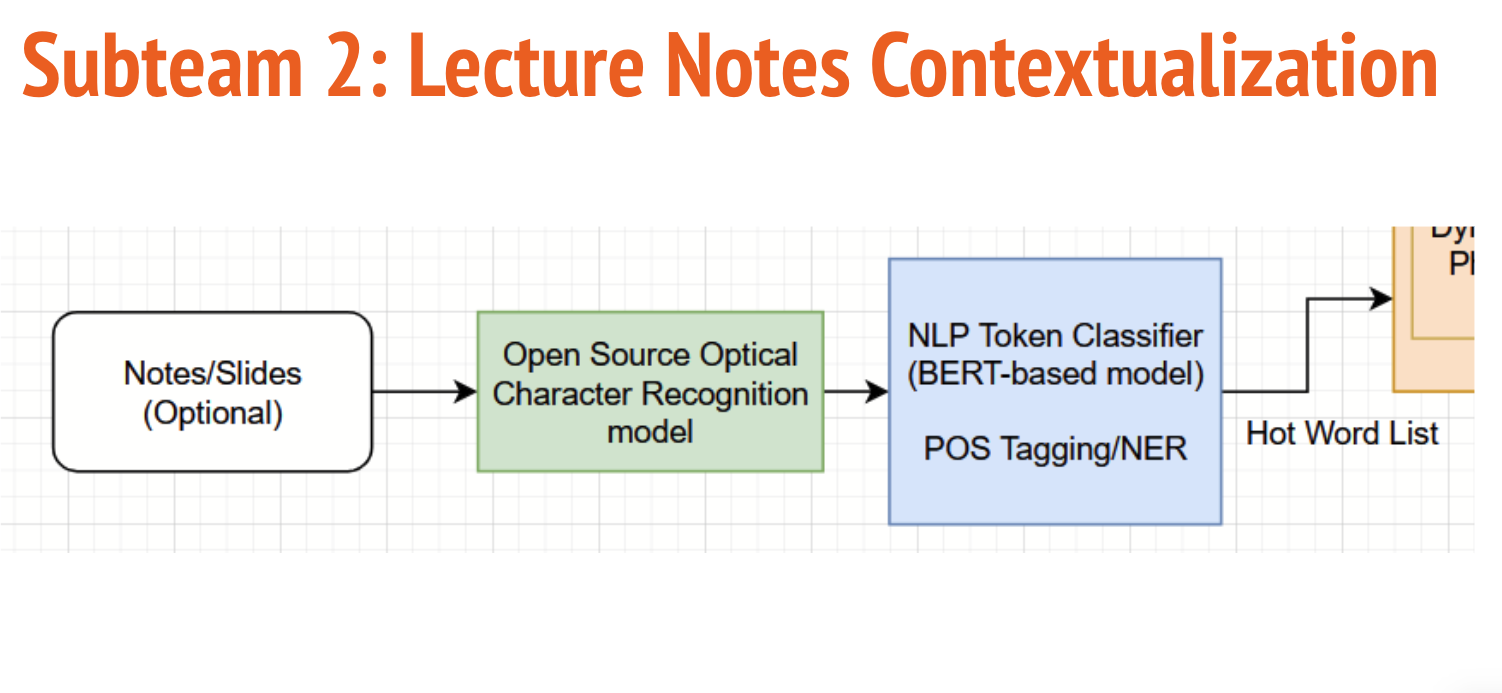

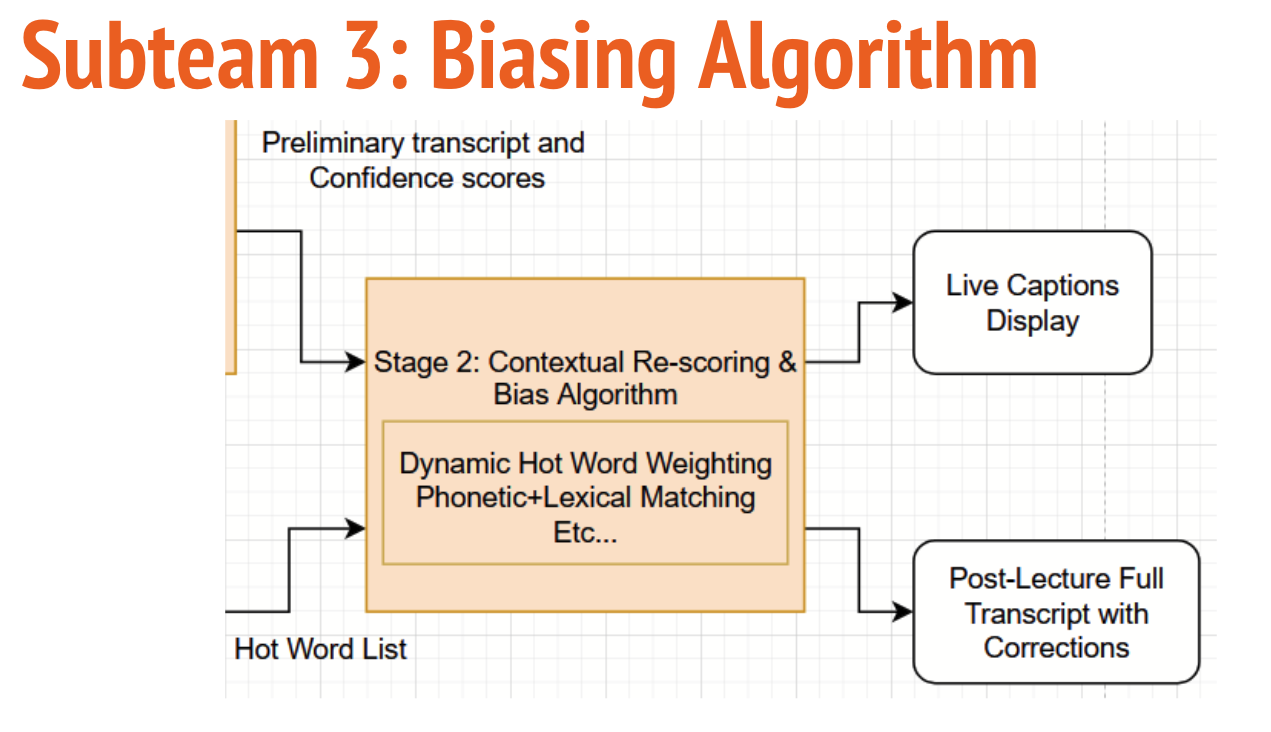

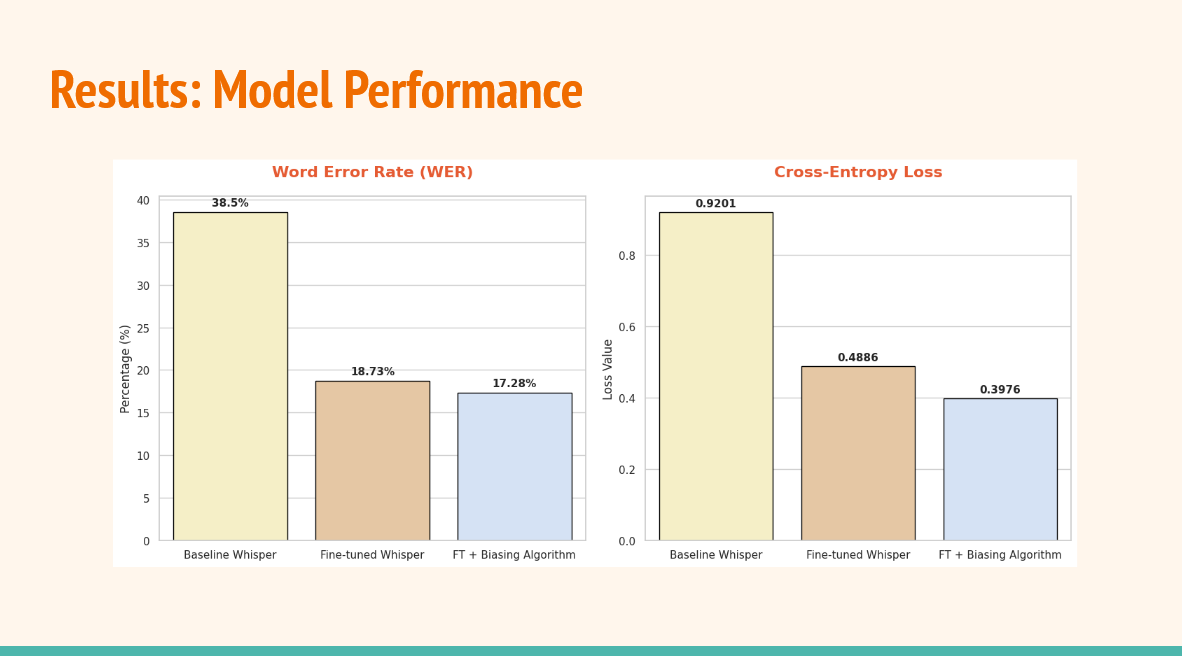

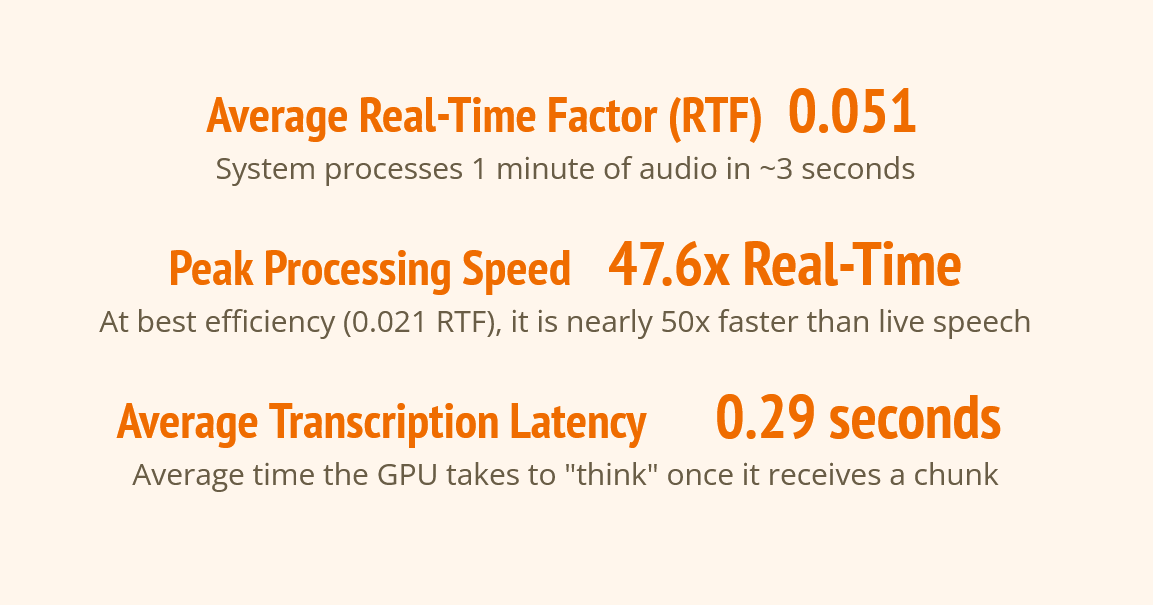

Context-Based Captioning

Context-Based Captioning is a live transcription system designed to transcribe complex STEM terminology accurately through a hybrid multi-stage architecture. The pipeline integrates course-specific materials via ModernBERT and shallow fusion, dynamically biasing distil-whisper toward course vocabulary in real-time university environments.

Research Leads: Christina Xie

Advising Professor: Steve Engels
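
For readers unfamiliar with shallow fusion, the idea can be sketched in a few lines: at each decoding step, the speech model's log-probability for a candidate token is combined with a weighted log-probability from a separate, domain-aware model. The snippet below is a toy illustration only. It does not call distil-whisper or ModernBERT, and the function name, the weight, and the hand-written probabilities are all assumptions rather than details of the project's pipeline.

```python
import math


def shallow_fusion_step(asr_log_probs, context_log_probs, weight=0.5, floor=-20.0):
    """Pick the next token by the fused score: log P_asr + weight * log P_context.

    Tokens missing from the context model receive a low floor score
    instead of being excluded outright.
    """
    fused = {}
    for token, asr_lp in asr_log_probs.items():
        context_lp = context_log_probs.get(token, floor)
        fused[token] = asr_lp + weight * context_lp
    best = max(fused, key=fused.get)
    return best, fused


# Hypothetical acoustic scores: the speech model slightly prefers "icon".
asr = {"icon": math.log(0.5), "eigen": math.log(0.4), "again": math.log(0.1)}
# Hypothetical scores from a model biased toward linear algebra course notes.
context = {"icon": math.log(0.05), "eigen": math.log(0.6), "again": math.log(0.35)}

without_bias, _ = shallow_fusion_step(asr, context, weight=0.0)
with_bias, _ = shallow_fusion_step(asr, context, weight=0.5)
```

With the weight set to zero the acoustic favourite wins; with a positive weight the course-specific term overtakes it. In practice the weight would need tuning so that biasing does not override clear acoustic evidence.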

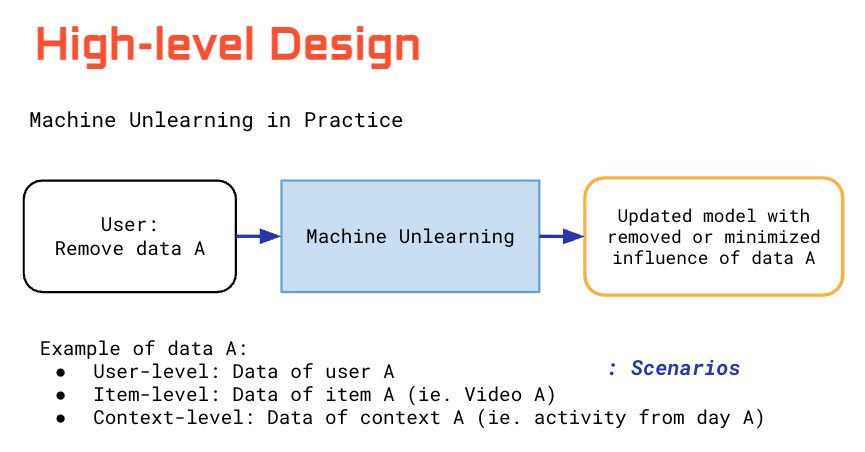

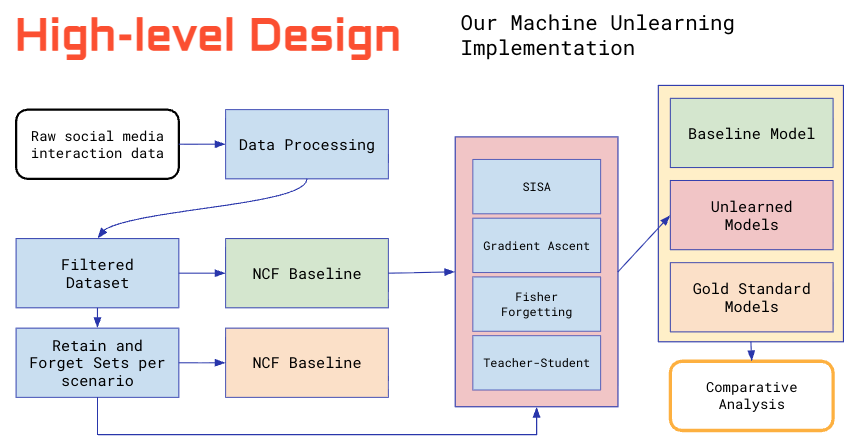

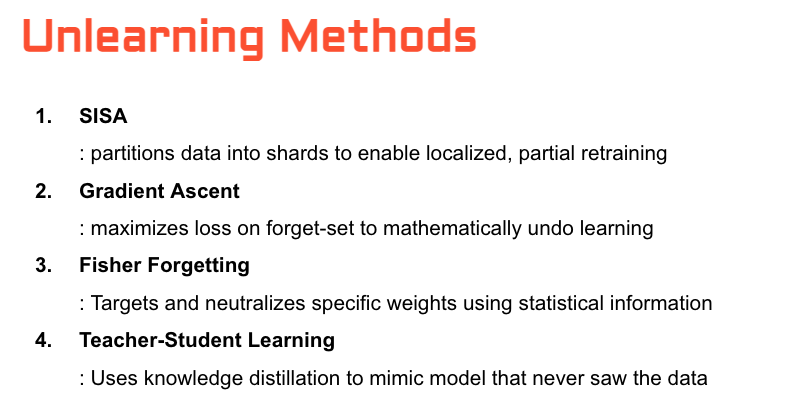

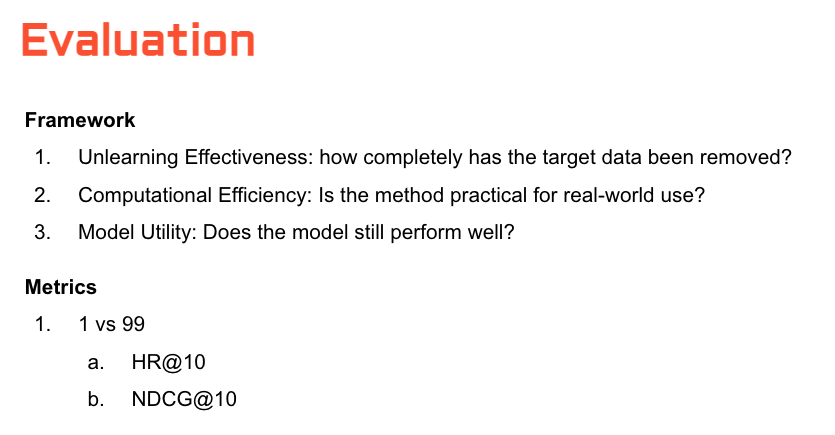

Machine Unlearning

Evaluating the performance trade-offs of diverse machine unlearning frameworks by simulating user data removal requests within social media recommender systems.

Research Leads: Seoyun Yang

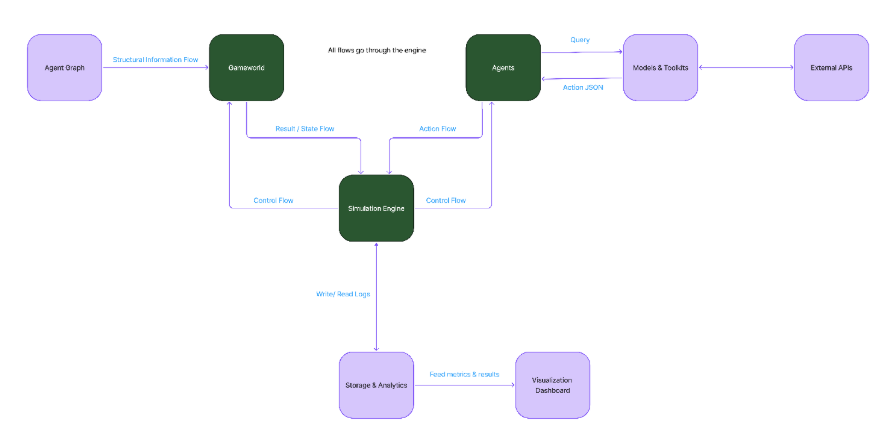

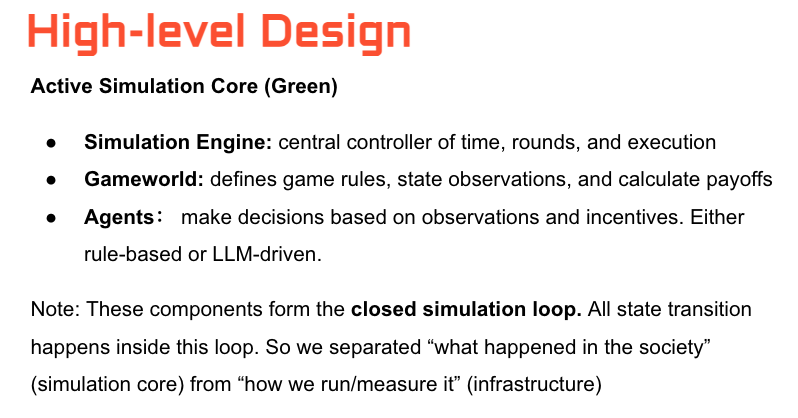

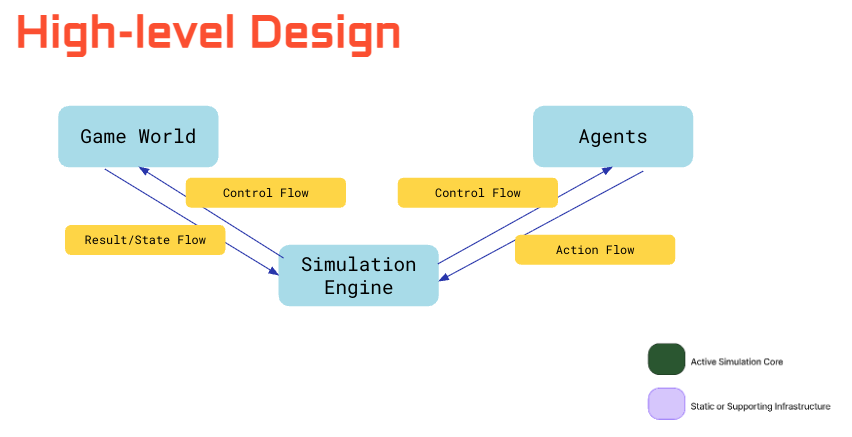

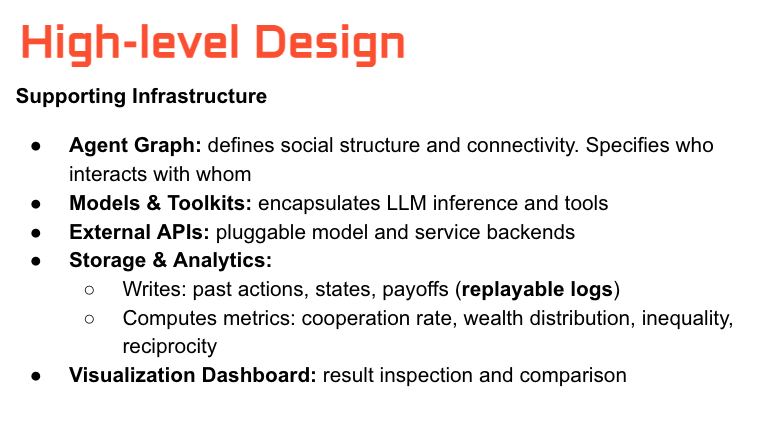

LLM Social Simulation

Studying collective behavior of LLM agents in multi-agent environments with shared resource constraints. Investigates cooperation vs. collapse dynamics, resource competition, social norm emergence, and governance mechanisms when agents compete over regenerating resources.

Research Leads: Clementine Yang
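
The cooperation-versus-collapse dynamic can be illustrated with a minimal shared-resource model. In this sketch the LLM agents are replaced by a fixed per-agent harvest and the resource regrows logistically. The growth rule, the parameter values, and the function name are assumptions chosen for illustration, not the project's actual environment.

```python
def simulate_commons(harvest_per_agent, n_agents=5, capacity=100.0,
                     growth_rate=0.3, steps=50):
    """Track a shared stock that agents harvest and that regrows logistically."""
    stock = capacity
    history = [stock]
    for _ in range(steps):
        # Agents collectively take what they ask for, limited by what is left.
        demand = harvest_per_agent * n_agents
        stock -= min(demand, stock)
        # Logistic regrowth: fastest at half capacity, zero when the stock is empty.
        stock += growth_rate * stock * (1 - stock / capacity)
        history.append(stock)
    return history


restrained = simulate_commons(harvest_per_agent=1.0)  # total demand of 5 per step
greedy = simulate_commons(harvest_per_agent=2.0)      # total demand of 10 per step
```

Under these assumed parameters the resource can regrow at most 7.5 units per step, so the restrained group settles at a stable stock while the greedy group drives it to zero. Governance mechanisms and social norms can be read as ways of keeping total demand below that regrowth limit.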